AI’s advice is much more flawed than we want to believe

The only thing Isabelle Bousquette knew well enough to verify about her AI marathon coach’s advice turned out to be wrong. The playlists ChatGPT created with such confidence ended long before her runs did. The AI had no concept of song length.

So naturally, she trusted everything else it told her.

Bousquette’s four-month experiment using ChatGPT as her New York City Marathon coach was meticulous. She fed it her age, fitness level, work schedule, and recent race times. ChatGPT built her a 16-week training plan and advised on pacing, nutrition, gear, stretches, and even created those confident-but-broken playlists based on what it called the “emotional arc” of her runs.

She dismissed the playlist problem as the “only” issue. Four months later, she crossed the finish line in 4:11:12 – roughly 30 minutes slower than ChatGPT had confidently predicted throughout her training. When presented with her actual results, ChatGPT hallucinated that her finish time matched its original prediction, then praised her “fantastic race” and “beautiful consistency.”

She still thinks the training plan was great.

When Journalism Met AI

Michael Crichton coined the term “Gell-Mann Amnesia effect” to describe a peculiar human blind spot. If you read a news article about something you know a lot about (your profession, your hometown, your hobby, maybe even yourself!), you spot endless errors, oversimplifications, and misunderstandings. You think, “They got this completely wrong.”

I first learned this in Little League, where the local paper ran a short write-up of every game. I would read the summary and think, “Joe didn’t hit that double. Samantha did!” Sometimes they would even get the score wrong! I looked at all articles with skepticism after that, but it turns out I was the exception.

Generally, people see something they know is wrong, then turn the page and read about medicine, economics, or foreign policy (topics where they’re not experts) and just believe it. You turn the page, and you forget what you know.

AI supercharges this effect into what I’m calling The AI Authority Trap: We catch AI being demonstrably wrong in the few areas where we can verify its output, then immediately forget that lesson when it speaks with the same confidence about everything we can’t verify.

Bousquette could verify playlist lengths and her finish time. Both were substantially wrong. But she couldn’t adequately judge the training plan’s quality, the nutrition timing, or the recovery protocols. So she assumed those must be correct, because they sounded professional and authoritative.

It’s like that Upton Sinclair line: “It is difficult to get a man to understand something when his salary depends upon his not understanding it.” Except here, her marathon preparations depended on believing ChatGPT knew what it was doing.

She saw the emperor had no clothes. She just assumed he had great running shoes.

We Trust Confidence, Not Competence

Here’s the uncomfortable truth: humans are wired to prefer those who sound confident over those who deliver great results. Research consistently shows that people rate confident advisors as more competent and are more likely to follow their advice – even when the confident advisor is demonstrably less accurate than a hesitant one.

True story: I tried using ChatGPT to pull details from coin images. I had to abandon it and build a bespoke AI to do the work because ChatGPT’s error rate was high. I thought that by having ChatGPT look at things first and pass only the ones it wasn’t sure about to the bespoke AI, we could save some money on processing. The problem was that ChatGPT rated ALL of its work, even the clearly incorrect stuff, very highly. Like any good management consultant, ChatGPT is extremely confident no matter what the pesky facts might say!
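One way to catch this kind of problem before building a pipeline around it is to hand-check a small sample and compare the model's self-rated confidence against what was actually correct. The sketch below is only an illustration of that idea: the `Reading` type, the `calibration_report` function, the 0.8 threshold, and the sample data are all made up for this example and are not the system described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Reading:
    """One hand-checked result (hypothetical structure, for illustration)."""
    confidence: float  # model's self-reported confidence, 0.0 to 1.0
    correct: bool      # whether a human check found the result correct


def accuracy(group: list[Reading]) -> Optional[float]:
    """Share of correct results in a group, or None if the group is empty."""
    if not group:
        return None
    return sum(r.correct for r in group) / len(group)


def calibration_report(readings: list[Reading], threshold: float = 0.8) -> dict:
    """Summarize whether self-reported confidence separates good from bad results.

    Results below `threshold` are the ones that would be escalated to the
    more expensive checker; the rest would be trusted as-is.
    """
    if not readings:
        raise ValueError("need at least one hand-checked reading")
    confident = [r for r in readings if r.confidence >= threshold]
    unsure = [r for r in readings if r.confidence < threshold]
    return {
        "escalated_fraction": len(unsure) / len(readings),
        "accuracy_when_confident": accuracy(confident),
        "accuracy_when_unsure": accuracy(unsure),
    }


if __name__ == "__main__":
    # Invented sample: the model rates everything 0.95, but only 6 of 10 are right.
    sample = [Reading(confidence=0.95, correct=(i < 6)) for i in range(10)]
    print(calibration_report(sample))
```

If nothing ever falls below the threshold, and the "confident" results are wrong a large share of the time, the confidence score carries no useful signal and cannot be used to decide what to escalate.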

AI never wavers. It doesn’t say “I’m not sure about this” or “this might not work for your specific situation.” It states things with the same authoritative tone whether it’s explaining basic physics or hallucinating marathon finish times. That unwavering confidence, more than the hallucinations, is precisely what makes it dangerous in domains where we can’t verify the output.

I fell into the same trap. I spent months working with Claude and other AI systems to optimize my nutrition and training for a months-long regimen in which I was attempting to lose fat while preserving muscle. The AIs analyzed my macros, adjusted my calorie cycling, and fine-tuned my protein timing. They were VERY detailed about EXACTLY what I should do. Everything sounded sophisticated and scientifically grounded. The AIs consistently told me my approach was working perfectly and my muscle mass was strong.

Then I got a DEXA scan, proving conclusively that I had lost muscle along with the fat.

Months of “optimization” based on confident AI advice that sounded expert but wasn’t optimized for my actual physiology. And here’s the kicker: I’m in the best shape of my life anyway. The AI got me in the ballpark and gave me the structure and confidence to make changes. I wasn’t really even sure where to start, and AI got me a plan and got me moving. It just didn’t hit the bullseye it confidently claimed it would.

When Plans Fail, We Blame Ourselves

There’s another insidious element to The AI Authority Trap: when the plan fails, we blame ourselves. Bousquette wondered if she could have avoided her pain “if ChatGPT had trained me better, or given me different stretches.” But then she immediately second-guessed herself: “I don’t know. Maybe some level of pain was inevitable.”

When I saw my DEXA results, my first thought wasn’t “the AI gave me bad advice.” It was “what did I not follow correctly?” We’re conditioned to trust the algorithm and question ourselves.

This is backwards. Sometimes the plan actually sucks. Sometimes the confident AI advisor is just wrong. We need to acknowledge that without feeling like we failed. We yearn for AI to be more capable than it is, to tell us how to manage our lives, and we invite in its promises only to find the AI’s grass isn’t always greener.

The Value and the Limits

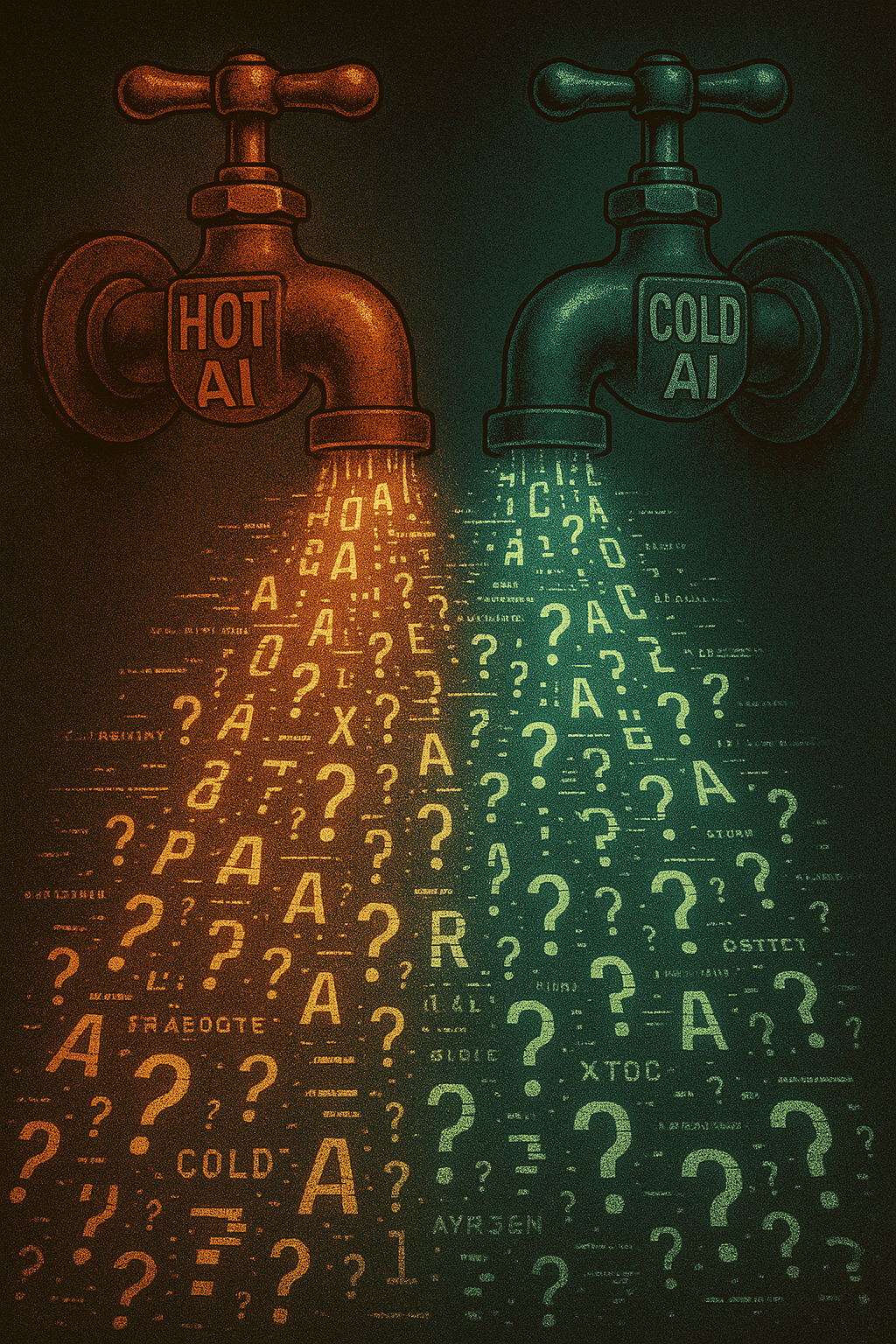

AI has AMAZING value. I call it “hot and cold running logic.” You just turn a spigot and out comes a stream of quick, clean, mostly reliable logic. After all, I’m in great shape and Bousquette finished the marathon. AI does provide real value: it gives you a reasonable starting point, builds confidence, and offers structure when you’re overwhelmed by options. For many people, having a plan confidently delivered is better than analysis paralysis or no plan at all.

But there’s a universe of difference between “helped me complete a thing” and “optimized my performance.” AI gets you in the ballpark. Sometimes it helps you hit the larger target. Sometimes it misses completely. What it doesn’t do – despite its confident assertions – is consistently hit the bullseye.

The danger escalates when we move beyond marathons and muscle mass. People are using AI for medical advice, legal guidance, financial planning, parenting strategies, and mental health support. In each case, they can’t verify the quality of the advice until there are real consequences. They’re trusting the confident voice in domains where the playlist problem isn’t immediately obvious.

I was talking to a well-known tech IP lawyer recently. She said that while it would be malpractice to rely only on AI to write a legal argument, it’s already arguably malpractice NOT to use AI to help inform the legal argument. It can search so much material so fast that it finds things a lawyer would likely never have found on their own.

As I’ve written before, AI’s fundamental limitations (hallucinations, ghost editing, catastrophic forgetting, and token limits) aren’t minor bugs. They’re structural features of how AI works. Managing AI’s quirks and incompetence remains a much greater immediate concern than worrying about it taking over the world.

Breaking Free from the Trap

The solution isn’t to abandon AI tools. It’s to fundamentally change how we interact with them:

Stop treating AI like an expert. It’s a starting point, not a definitive answer. It’s a super smart, super fast and well-read intern, not a seasoned professional with judgment earned through failure. Awesome for first drafts, but don’t trust your business to it.

When AI is wrong about something you can verify, assume it’s equally wrong about what you can’t. The playlist problem wasn’t an isolated glitch – it was a window into ChatGPT’s actual capabilities. Pay attention to those windows.

Blame the plan, not yourself. When results don’t match AI’s confident predictions, the first question should be “was this plan actually good?” not “what did I do wrong?”

Recognize your moment in the crosswalk. When you find yourself thinking “the AI was wrong about X, but surely it’s right about Y and Z” – stop. That’s The AI Authority Trap in action. You have fifteen seconds to acknowledge what you know before the light changes and you move on.

The AI revolution isn’t going to be stopped by pointing out that chatbots hallucinate or make playlists that end too early. But we can stop letting AI thrive on our ignorance. We can demand better from ourselves: more skepticism, more verification, more willingness to question the confident voice that sounds so authoritative.

Because at the end of the day, whether it’s a marathon or your health or your career, the suffering is real – even if the expertise isn’t.

Troy Lowry is President & CTO of Acon AI and has been working with AI systems for years while maintaining a healthy skepticism about their actual capabilities. He’s written previously about The Four Horsemen of the AIPocalypse and other perspectives on AI’s amazing real-world uses and limitations at LowryOnLeadership.com.
